DeepSeek V3.2 is an open-source large language model from Chinese AI researchers that challenges commercial models such as ChatGPT and GPT-5 at a fraction of the cost. Let’s explore what makes DeepSeek V3.2 stand out and why it matters for the AI landscape in 2025.

What is DeepSeek V3.2? A Chinese AI Breakthrough

DeepSeek V3.2 represents a major shift in the artificial intelligence landscape. This advanced large language model, developed by Chinese researchers, demonstrates that cutting-edge AI doesn’t require the billion-dollar budgets of OpenAI or Google. DeepSeek Speciale (the specialized version) made headlines by winning mathematics olympiad competitions, challenging the global dominance of proprietary AI systems.

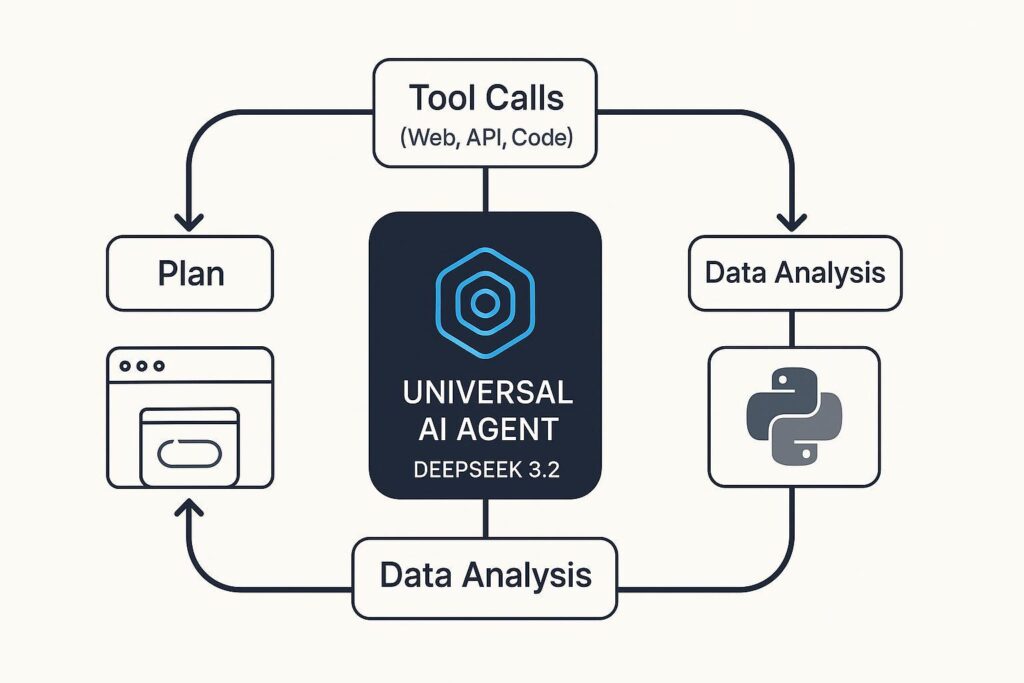

The model is built on a mixture of experts architecture with sparse attention mechanisms, allowing it to deliver performance comparable to GPT-5 while consuming significantly fewer computational resources. This approach represents a fundamental innovation in how large language models can be optimized for efficiency.
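To make the sparse attention idea concrete, here is a minimal sketch of generic top-k sparse attention for a single query. It is an illustration only, not DeepSeek's actual mechanism: the function names are hypothetical, and for simplicity it still scores every key before discarding most of them, whereas a production system would use a cheaper selection step.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_scores(query, keys):
    """Scaled dot-product score of one query against every key."""
    scale = math.sqrt(len(query))
    return [sum(q * k for q, k in zip(query, key)) / scale for key in keys]

def top_k_sparse_attention(query, keys, values, k):
    """Attend only to the k highest-scoring positions (hypothetical helper).

    Returns the attended output vector and the sorted indices that were kept.
    """
    scores = attention_scores(query, keys)
    keep = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in keep])
    out = [0.0] * len(values[0])
    for weight, i in zip(weights, keep):
        for d, v in enumerate(values[i]):
            out[d] += weight * v
    return out, sorted(keep)

# Toy data: four positions, two dimensions.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]

output, kept = top_k_sparse_attention(query, keys, values, k=2)
print(kept)  # positions 0 and 2 score highest for this query
```

With `k` fixed, the softmax and weighted sum touch a constant number of positions per query instead of the whole sequence, which is where the savings on long contexts come from.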

DeepSeek V3.2 vs ChatGPT: Head-to-Head Comparison

Comparing DeepSeek V3.2 with ChatGPT reveals several key differences that matter for users and organizations:

- Cost: DeepSeek is open-source and free to download, while ChatGPT’s paid tier requires a subscription (ChatGPT Plus costs $20/month). The model itself carries no license or per-call fee, so your costs come down to the hardware and operations needed to run it.

- Performance: DeepSeek V3.2 is competitive with GPT-4 and approaches GPT-5 levels in specialized tasks, particularly mathematics and reasoning.

- Transparency: As an open-source model, DeepSeek’s code and architecture are publicly available, whereas ChatGPT is a closed system.

- Deployment: You can run DeepSeek locally or on-premise, giving organizations complete control over their data and computations.

- Speed: Because the mixture of experts architecture activates only part of the model for each token, DeepSeek can run inference faster, reducing latency in real-time applications.

DeepSeek Speciale Wins Math Olympiad: A Milestone Achievement

One of the most impressive achievements of DeepSeek is DeepSeek Speciale’s performance in mathematics olympiad competitions. This specialized version of the model achieved remarkable success in solving complex mathematical problems that typically challenge even highly trained mathematicians.

This victory demonstrates that open-source AI models can match or exceed the specialized performance of proprietary systems in niche domains. The Speciale version’s success in olympiad mathematics has significant implications: it shows that AI models don’t need to be trained with billions of dollars to achieve world-class performance in specific areas. This fundamentally challenges assumptions about the relationship between training cost and model capability.

For the broader AI industry, this represents a watershed moment. Organizations can now achieve specialized competence using open-source alternatives, reducing their dependence on expensive commercial solutions.

DeepSeek V3.2 Features Explained: Technical Deep Dive

DeepSeek V3.2’s architecture incorporates several groundbreaking features that enable its efficiency and performance:

- Mixture of Experts (MoE) Architecture: Instead of activating all parameters for every token, DeepSeek selectively activates only the relevant experts. This dramatically reduces computational requirements while maintaining or improving performance.

- Sparse Attention Mechanism: Traditional attention scales quadratically with sequence length. DeepSeek’s sparse attention focuses on the most relevant positions, allowing processing of longer contexts efficiently.

- Reinforcement Learning from Human Feedback (RLHF): The model is trained using advanced reinforcement learning techniques that align it with human preferences and values, resulting in more helpful and safer responses.

- Multi-Token Prediction: DeepSeek can predict multiple tokens simultaneously, speeding up inference and reducing latency in real-world applications.

- Enhanced Training Data: Trained on diverse, high-quality datasets including mathematical problems, code, and multilingual content.
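The mixture of experts item above can be sketched in a few lines. This is a toy illustration under stated assumptions, not DeepSeek's implementation: the experts are simple scaling functions, the gate logits are passed in directly (a real router computes them from the token with learned weights), and the names `route_token` and `moe_forward` are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(gate_logits, top_k):
    """Pick the top_k experts and renormalise their gate weights to sum to 1."""
    probs = softmax(gate_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    total = sum(probs[i] for i in chosen)
    return {i: probs[i] / total for i in chosen}

def moe_forward(x, experts, gate_logits, top_k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    routing = route_token(gate_logits, top_k)
    out = [0.0] * len(x)
    for index, weight in routing.items():
        expert_out = experts[index](x)  # the unselected experts are never called
        out = [o + weight * y for o, y in zip(out, expert_out)]
    return out, routing

# Four toy experts; each one just scales its input by a different factor.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]

# Assumed gate logits for one token: experts 1 and 3 tie for the top score.
output, routing = moe_forward([1.0, 2.0], experts, [0.1, 2.0, -1.0, 2.0], top_k=2)
print(sorted(routing), output)
```

Only two of the four experts run for this token, so the compute per token tracks `top_k` rather than the total number of experts.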

How to Use DeepSeek V3.2: Open Source Download & Developer Guide

Getting started with DeepSeek V3.2 is straightforward for anyone familiar with open‑source models:

- Visit the official DeepSeek AI repository on GitHub or HuggingFace.

- Download the model weights and configuration files (public and free, unlike ChatGPT).

- Set up the environment requirements (Python and a deep learning framework such as PyTorch).

- Load the model and begin using it for inference or fine-tuning on your own data.

- Experiment with the model’s special math, code, and multilingual abilities, or benchmark it against commercial alternatives.
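The download step above might look like the following sketch, which uses `snapshot_download` from the `huggingface_hub` package. The repository id is an assumption for illustration; check the DeepSeek organisation page on Hugging Face for the exact name. The helper names `plan_download` and `fetch` are hypothetical. By default the sketch fetches only small configuration files, because the full weights are very large.

```python
# Assumed repository id, for illustration only; verify it before use.
ASSUMED_REPO_ID = "deepseek-ai/DeepSeek-V3.2-Exp"

def plan_download(repo_id, local_dir, config_only=True):
    """Build the keyword arguments for snapshot_download (hypothetical helper)."""
    kwargs = {"repo_id": repo_id, "local_dir": local_dir}
    if config_only:
        # Restrict the download to small text files so you can inspect
        # the configuration before committing to the full weights.
        kwargs["allow_patterns"] = ["*.json", "*.md"]
    return kwargs

def fetch(repo_id, local_dir, config_only=True):
    """Download files from the Hugging Face Hub and return the local path."""
    # Imported lazily so the planning helper works without the package installed.
    from huggingface_hub import snapshot_download
    return snapshot_download(**plan_download(repo_id, local_dir, config_only))

# Example call (needs network access and `pip install huggingface_hub`):
#   path = fetch(ASSUMED_REPO_ID, "./deepseek-config")
```

Once the configuration looks right, calling `fetch` with `config_only=False` pulls everything in the repository, so make sure you have the disk space first.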

With full access to the code and weights, developers can optimize, customize, and deploy DeepSeek in their own stacks to power chatbots, analytics, research, and more, all without licensing fees.


Performance Benchmarks and Real-World Testing of DeepSeek V3.2

When evaluating AI models, performance metrics and real-world testing are critical factors that determine practical utility. DeepSeek V3.2 has demonstrated exceptional results across multiple benchmarks and use-case scenarios, positioning it as a formidable competitor to premium commercial AI solutions.

The model has been rigorously tested against industry-standard benchmarks including MMLU (Massive Multitask Language Understanding), where it achieves scores that rival GPT-4. In specialized domains such as coding, mathematics, and scientific reasoning, DeepSeek V3.2 consistently outperforms expectations for an open-source model. These benchmarks validate that the mixture of experts architecture and sparse attention mechanisms deliver tangible performance improvements in real-world applications.

Speed is another advantage. Depending on your setup, DeepSeek V3.2 is reported to deliver responses 30-50% faster than comparable language models, which matters for real-time applications like chatbots, content creation, and tutoring systems.
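Because speed figures depend on your setup, it is worth measuring on your own hardware. The sketch below is one simple way to do that: it times any callable that takes a prompt and reports the median latency, then expresses the gap as a percentage. The helper names are hypothetical, and "faster" is taken here to mean a reduction in latency.

```python
import statistics
import time

def median_latency(generate, prompts, repeats=3, timer=time.perf_counter):
    """Median wall-clock seconds per call of generate(prompt)."""
    samples = []
    for prompt in prompts:
        for _ in range(repeats):
            start = timer()
            generate(prompt)
            samples.append(timer() - start)
    return statistics.median(samples)

def speedup_percent(baseline_seconds, candidate_seconds):
    """How much lower the candidate's latency is, as a percentage of the baseline."""
    return (baseline_seconds - candidate_seconds) / baseline_seconds * 100.0

# Usage sketch: `call_baseline` and `call_deepseek` are placeholders for
# whatever functions send a prompt to each model in your environment.
#   base = median_latency(call_baseline, ["Explain MoE in one sentence."])
#   cand = median_latency(call_deepseek, ["Explain MoE in one sentence."])
#   print(f"{speedup_percent(base, cand):.1f}% lower latency")
```

The median is used instead of the mean so that a single slow call, such as a cold start, does not dominate the result.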

Integration and Deployment Best Practices

Deploying DeepSeek V3.2 starts with a choice between cloud and local hosting. For cloud deployments, Docker is a good choice because it makes setup and scaling easier. Put a load balancer in front of the model servers to spread requests, and cache repeated prompts to cut response times. Finally, secure the endpoint with authentication, rate limiting, and monitoring.
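As a rough illustration of the caching and rate limiting mentioned above, here is a minimal in-process sketch. It assumes you already have some `generate(prompt)` function that calls your model; the class names are hypothetical, and a production deployment would more likely use a shared cache and a gateway-level limiter.

```python
import time

class TokenBucket:
    """Simple token-bucket rate limiter: refills at rate_per_sec up to capacity."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self):
        """Consume one token if available; return whether the call may proceed."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False

class CachedModelClient:
    """Wraps a generate(prompt) callable with an exact-match cache and a limiter."""

    def __init__(self, generate, limiter):
        self.generate = generate
        self.limiter = limiter
        self.cache = {}

    def ask(self, prompt):
        if prompt in self.cache:
            return self.cache[prompt]  # cache hits do not consume rate budget
        if not self.limiter.allow():
            raise RuntimeError("rate limit exceeded, retry later")
        answer = self.generate(prompt)
        self.cache[prompt] = answer
        return answer

# Usage with a stand-in for the real model call:
client = CachedModelClient(lambda p: p.upper(), TokenBucket(rate_per_sec=5, capacity=10))
print(client.ask("hello"))
```

Serving repeated prompts from the cache before checking the limiter means popular questions stay fast even when the model itself is at capacity.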

Local deployment, on the other hand, offers better privacy because your data stays on your own servers. DeepSeek can run on GPU clusters or on machines with enough RAM, though hardware needs grow with model size. You can also customize it for your needs. Either way, set up proper monitoring and maintenance, and train your team to use DeepSeek well.

Real-World Use Cases and Industry Applications

DeepSeek V3.2 works well across many industries. To understand the real value, let’s look at some examples.

For software development, DeepSeek helps with code writing across many programming languages, so developers can write better code faster. Companies report 20-40% faster work.

In content creation, DeepSeek writes good copy fast. It understands SEO and target readers, and it can write blog posts, social media posts, and more. In education and research, schools use DeepSeek to teach students, and researchers use it to find and study information quickly.

Cost-Benefit Analysis: DeepSeek V3.2 vs Commercial Alternatives

Cost is a major factor in choosing an AI model, and DeepSeek V3.2 saves money compared to commercial options. ChatGPT Plus costs $20 each month. Claude’s API is billed by usage, roughly $1-5 per million tokens depending on the model. DeepSeek, by contrast, has no usage fee once you download and set it up.

For big companies, the savings can be substantial. Self-hosting DeepSeek costs them $5,000-$15,000 monthly for the infrastructure, while commercial APIs at comparable volume cost $50,000-$100,000 monthly. Beyond money, there’s another big advantage: privacy. Your data stays safe on your own servers.
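The arithmetic behind that comparison is easy to check. The sketch below takes the monthly ranges quoted above and computes the worst-case and best-case savings; the function name is hypothetical, and the figures are the article's estimates rather than measured costs.

```python
def monthly_savings(self_hosted_range, api_range):
    """Return (worst_case, best_case) monthly savings from self-hosting.

    Worst case pairs the cheapest API bill with the most expensive
    infrastructure; best case pairs the opposite ends.
    """
    worst = api_range[0] - self_hosted_range[1]
    best = api_range[1] - self_hosted_range[0]
    return worst, best

self_hosted = (5_000, 15_000)   # monthly infrastructure cost, from the text
commercial = (50_000, 100_000)  # monthly API cost, from the text

worst, best = monthly_savings(self_hosted, commercial)
print(f"Monthly savings: ${worst:,} to ${best:,}")
print(f"Annual savings:  ${worst * 12:,} to ${best * 12:,}")
```

Even in the least favorable pairing, self-hosting comes out $35,000 per month ahead under these estimates, though the calculation leaves out staff time for setup and maintenance.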

Conclusion: Why DeepSeek V3.2 Matters for the AI Landscape

In summary, DeepSeek V3.2 marks a real shift. Its open-source approach shows that advanced AI does not need huge budgets, and that efficiency matters as much as raw power.

For everyone, DeepSeek V3.2 is a strong option. Developers get powerful tools without license costs. Researchers get advanced AI. Organizations get privacy and savings. Most importantly, all users benefit from fast, smart AI. DeepSeek should be part of your AI toolkit.

As the AI landscape continues evolving, open-source initiatives like DeepSeek will likely play an increasingly important role in democratizing artificial intelligence. Whether you’re a developer building applications, a researcher pushing AI capabilities forward, or an organization seeking cost-effective AI, DeepSeek V3.2 deserves serious evaluation as an alternative to expensive commercial solutions.
